The 17-Point On-Page SEO Checklist

Search engine optimization (SEO) is a set of techniques that help search engines find and rank your site in response to a search query. It has two main parts: on-page optimization and off-page optimization. In this post we discuss the various on-page factors and the points to remember while optimizing them.

On-page optimization, in a nutshell, refers to all the measures that can be taken directly within the website to improve its ranking in search engines, including titles, meta descriptions, Hx tags, schema markup, and so on. It is a foundational part of the overall SEO strategy and focuses on indexation, crawlability, and website relevancy.

Off-page, on the other hand, refers to all the things that you can do directly OFF your website to help you rank higher, such as social networking, PR submission, and forum & guest blog marketing.

Before we move on, if you need any kind of help with SEO, you should definitely reach out to us. Check out our team. Let’s talk.

For the purpose of giving beginners a reference guide, we have done detailed research on on-page factors and summarized some of the most important optimization techniques. All points are described below in detail:

1) Keyword Research

Keyword research is an important part of SEO, because the keywords you target determine the kind of visitors you attract. Effective keyword research plays a critical role in bringing relevant visitors to your site.

After studying the business, find the relevant converting keywords for your niche and plan a keyword theme, including both broad and long-tail terms, that can drive targeted traffic to your website. You can use tools like Google Keyword Planner, Keyword Tool, and Google Trends to find converting search terms.

Here are the key points to remember while doing keyword research for your website:

- Study the business and niche to figure out the keyword theme

- Do keyword research with various tools, considering search volume and competition

- Understand the search trends in the business niche and revise the keyword list

- Collect a list of related keywords and synonyms within the business theme

- Run Google searches to observe search behavior in the target market, and finalize the list after considering Google's suggestions

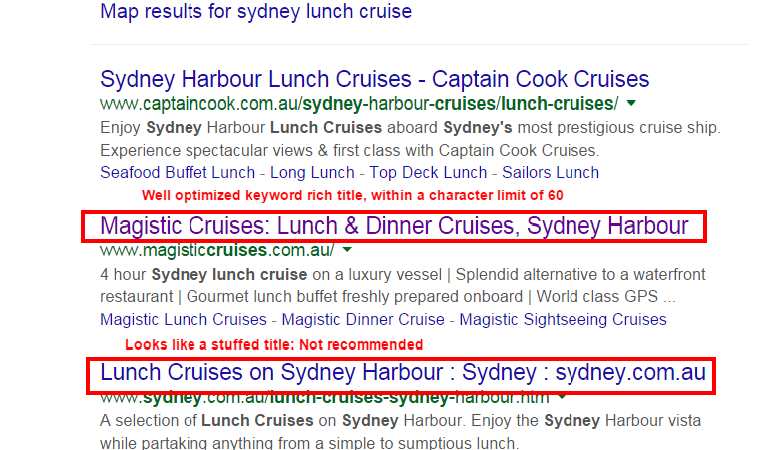

2) Title Tag

Title tags, technically called title elements, define the title of a webpage. They are often used on search engine results pages to display preview snippets for a given page, and are important for both SEO and social sharing.

A well-written title can significantly improve your click-through rate, and it is important for both users and search engines that it contains the keyword being searched for. Here are the standards for a well-optimized title tag:

- The title tag should accurately reflect the topic of the page

- It should be compelling enough for a user to click

- It should include the main keyword of the page

- Site branding should go last

- It should not exceed 60 characters

- It should be unique for every page

- It should include the location the business targets

Refer to the screenshot below and compare the keyword-rich, well-optimized page title with the un-optimized title tag.

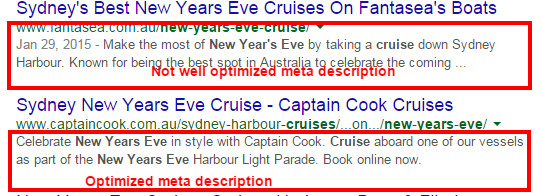
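The title standards above can be turned into a quick automated check. The following is a minimal sketch, not a definitive tool: the function name `check_title`, the bakery titles, and the rule that the brand name must be the final text in the title are all assumptions made for illustration.

```python
def check_title(title: str, keyword: str, brand: str, max_length: int = 60) -> list[str]:
    """Return a list of problems found in a page title (empty list = no problems)."""
    problems = []
    if len(title) > max_length:
        problems.append(f"title is {len(title)} characters; limit is {max_length}")
    if keyword.lower() not in title.lower():
        problems.append(f"main keyword '{keyword}' is missing")
    # Branding should come last, so the title is expected to end with the brand name.
    if not title.rstrip().lower().endswith(brand.lower()):
        problems.append(f"brand '{brand}' is not at the end")
    return problems


# Hypothetical titles for an imaginary bakery site.
print(check_title("Custom Wedding Cakes in Austin | Sweet Crumb", "wedding cakes", "Sweet Crumb"))
print(check_title("Home", "wedding cakes", "Sweet Crumb"))
```

A check like this only covers the mechanical rules; whether a title is compelling still needs a human eye.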

3) Meta Description Tag

Meta descriptions are HTML meta tags that provide a brief overview of the page's subject matter. Though search engines do not consider the meta description a ranking factor, it plays an important role in increasing the click-through rate of your pages.

Therefore, it should be a compelling description that a searcher will click without hesitation. Usually, it shouldn't be more than 160 characters, with relevant keywords worked naturally into the sentence.

An ideal Meta description tag should:

- Describe the page content and compel the user to visit the page

- Stay within 150 to 160 characters

- Be optimized with main and secondary keywords, plus the location if you are targeting a location

- Be compelling enough for a user to click

- Be unique; avoid duplicate meta descriptions across different pages

Refer to the screenshot below to understand the difference between an optimized and an un-optimized meta description. The first meta description is truncated before the sentence is complete, while the second, optimized one displays the full message.

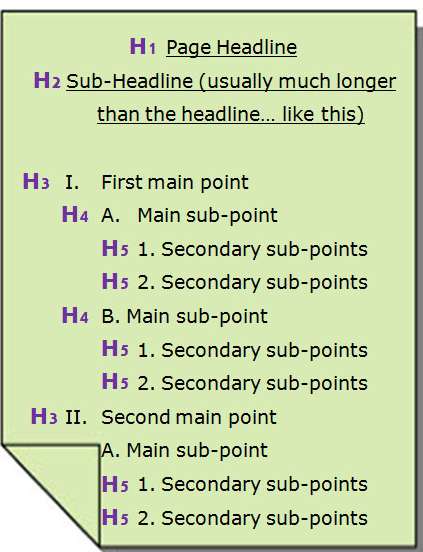
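To catch truncation before it shows up in search results, you can measure the description length directly from the page markup. Here is a rough sketch using Python's standard `html.parser` module; the class and function names and the sample page are hypothetical, and the 160-character threshold is simply the limit mentioned above.

```python
from html.parser import HTMLParser


class MetaDescriptionParser(HTMLParser):
    """Collects the content of <meta name="description"> if present."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = attributes.get("content") or ""


def description_length(html: str):
    """Return the character length of the meta description, or None if it is missing."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    return None if parser.description is None else len(parser.description)


# Hypothetical page markup, for illustration only.
page = '<head><meta name="description" content="Order custom wedding cakes in Austin."></head>'
length = description_length(page)
print(length, "characters:", "ok" if length is not None and length <= 160 else "needs work")
```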

4) Hx Tags

The H1 tag is the first and most important heading in the content of a page. It tells what the content of the page is about, so search engines value it a lot.

There are six different heading tags: H1, H2, H3, H4, H5, and H6. Collectively these are referred to as Hx tags. They inform the search engine how the content is structured for readability.

For example, an H1 tag marks the page title, which is followed by subheadings marked with H2 and H3 tags. Secondary subheadings can be marked with H4 and H5 tags. A well-optimized Hx tag should meet the following standards:

- Include target keywords in the H1 and secondary keywords in the other Hx tags

- The H1 tag must be unique for every page

- The H1 should explain what the page is about and should be similar to the title, but not identical

- Headings should appeal to the user and encourage the desired action

- Use H2 and H3 tags with secondary keywords to mark the subsections of the page

- H4, H5, and H6 tags should follow the hierarchical structure and the keyword theme
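The hierarchy rules lend themselves to a simple structural check. The sketch below is one possible approach under two assumptions of ours that the checklist does not state outright: each page should contain exactly one H1, and a heading should never jump more than one level deeper than the one before it. The names `HeadingOutline` and `heading_problems` are made up for this example.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Records the level (1-6) of every h1..h6 tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """Return a list of heading-structure problems (empty list = none found)."""
    parser = HeadingOutline()
    parser.feed(html)
    levels = parser.levels
    problems = []
    if levels.count(1) != 1:
        problems.append(f"expected exactly one h1, found {levels.count(1)}")
    # A heading may go deeper by only one level at a time (h2 -> h3, not h2 -> h4).
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            problems.append(f"h{previous} is followed by h{current}, skipping a level")
    return problems


print(heading_problems("<h1>Cakes</h1><h2>Wedding</h2><h4>Pricing</h4>"))
```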

The screenshot below shows how Hx tags can be used properly.

5) Images

Images are a strong visual part of a webpage. Alt text conveys relevant information about an image to search engines, while the image title is a brief description of the visual content and context of an image.

If optimized properly, images can drive organic traffic from Google Image Search. Both pieces of information help search engines understand the image better.

They also increase the keyword relevance of a page if used in a natural way. Consider the following while optimizing your alt text:

- Alt text should give an accurate description of the image

- It should contain keywords relevant to the image and to the content's keyword theme

- Alt text should not exceed 150 characters

- A title attribute is mandatory for image optimization, and it should describe the image accurately

- An alt attribute is mandatory for every image that you want indexed in search engines

- Keep image file sizes small, since page loading time is one of the signals used in search ranking

- Try to include keywords in filenames, as this helps search engines detect relevancy

- Images should be a minimum of 320 pixels and a maximum of 1280 pixels on one side
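Missing alt text is easy to overlook on pages with many images, so it can help to scan the markup for it. The following is an illustrative sketch only; `ImageAudit` and `audit_images` are hypothetical names, and it checks just two of the points above (alt text present, and no longer than 150 characters).

```python
from html.parser import HTMLParser


class ImageAudit(HTMLParser):
    """Flags <img> tags whose alt text is missing, empty, or too long."""

    def __init__(self, max_alt_length=150):
        super().__init__()
        self.max_alt_length = max_alt_length
        self.problems = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or "(no src)"
        alt = attributes.get("alt")
        if not alt:
            self.problems.append(f"{src}: missing or empty alt text")
        elif len(alt) > self.max_alt_length:
            self.problems.append(f"{src}: alt text is {len(alt)} characters")


def audit_images(html: str) -> list[str]:
    """Return one message per image that breaks the alt text rules."""
    audit = ImageAudit()
    audit.feed(html)
    return audit.problems


# Hypothetical markup: the second image has no alt attribute.
print(audit_images('<img src="cake.png" alt="Three-tier wedding cake"><img src="logo.png">'))
```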

6) Anchor Text

Anchor text is the visible text on a web page that has a URL attached to it. Search engines follow the anchor (link) on a page to reach another page.

They use the text to determine the subject matter of the linked-to document. This helps search engines understand the context of a page within the website, and can boost your site's ranking for relevant searches. For example, consider the following sentence:

I prefer [BBC World News](http://www.bbc.co.uk) to [CNN](http://www.cnn.com) because it provides more international news and seems to be more balanced.

In the above example, the links tell search engines that http://www.cnn.com is a relevant site for the term “CNN” and that http://www.bbc.co.uk is relevant to “BBC World News.” If many sites link to a particular page for a given set of terms, that page can rank well for those terms.

Code sample:

<a href="http://www.sample.com">Sample Anchor Text</a>

A well optimized anchor text should:

- Include both primary and secondary keywords, according to the context of the page

- Use dofollow and nofollow links in a sensible ratio on each page

- Avoid too many exact-match anchor texts; otherwise the page may be treated as keyword-stuffed

7) Content Quality & Optimization

Your content may be great, but it won't help your marketing efforts if it is not optimized for search engines. The process of making content attractive to search engines is called content optimization.

Optimizing content is not just inserting specific keywords a number of times to increase keyword density; it means making the content well written, organized, useful, relevant, and social-ready for website users by optimizing it with the right elements.

Here are a few important things to consider while optimizing your content:

- Make sure all copy passes a Copyscape or other duplicate-content check

- Make it informative to users

- Use all primary and secondary keywords (including long-tail keywords) while optimizing your webpage content

- While developing content, consider the keyword theme for the website and its categories

- Write to provide value for users/clients

- Content on every page should be unique

- Maintain a minimum word count on individual pages, since Google's Panda algorithm penalizes duplicate and thin content

8) Keyword Density

Keyword density is considered an important metric in on-page SEO. It is the number of times a keyword appears on a web page, expressed as a percentage of the total number of words on that page. Optimizing your website content with relevant and targeted keywords is essential for the success of an SEO campaign.

Here are the important things to consider while optimizing webpage for keyword density:

- The primary keyword should appear in the opening sentence of the first paragraph

- The density of any single keyword shouldn't exceed 3%

- The primary keyword should have a higher density than other phrases

- Use a variety of keyword phrases instead of concentrating on only one or two keywords

- Keywords in the content should be used in a natural manner

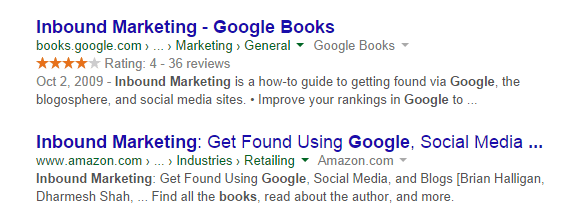
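Since keyword density is just occurrences divided by total words, it is straightforward to compute. The sketch below follows the definition given above; the tokenization rule (lowercase letters, digits, and apostrophes count as word characters) and the sample sentence are our own assumptions, and real tools may count words differently.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Occurrences of `phrase` as a percentage of the total word count of `text`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Slide a window of n words over the text and count exact phrase matches.
    occurrences = sum(
        1 for i in range(len(words) - n + 1) if words[i:i + n] == target
    )
    return 100.0 * occurrences / len(words)


# Hypothetical copy: 2 occurrences of the phrase in 11 words.
sample = "Fresh wedding cakes baked daily. Our wedding cakes ship across Austin."
density = keyword_density(sample, "wedding cakes")
print(f"{density:.1f}%", "(over the 3% guideline)" if density > 3 else "(within the 3% guideline)")
```

On very short text like this sample the percentage is naturally high; the 3% guideline is meant for full pages of content.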

9) Schema markup

Schema markup is a type of microdata that makes it easier for search engines to interpret the information on a webpage and serve relevant results for user queries. By adding schema, you can change the way your page is displayed in the SERP through rich snippets that appear beneath the page title.

Consider the following things while tagging schema to your content:

- Tag all possible content with schema; use http://schema.org to find the relevant data types for your content, such as restaurant, event, organization, or creative work

- Add schema creatively, so that the resulting snippet compels a user to click on the link

- Check the added schema in your webmaster tool and fix any errors

- If no schema type matches your content, you can use the additionalType property (itemprop="additionalType")
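As a rough illustration of what review markup contains, here is a small sketch that generates it. Note one substitution: the text above talks about microdata, while this example emits JSON-LD, a different syntax for the same schema.org vocabulary. The function name, product name, and numbers are hypothetical; `Product`, `AggregateRating`, `ratingValue`, and `reviewCount` are schema.org terms.

```python
import json


def review_snippet_jsonld(name: str, rating: float, review_count: int) -> str:
    """Build a <script> tag with schema.org AggregateRating data as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical product and numbers, for illustration only.
print(review_snippet_jsonld("Classic Wedding Cake", 4.6, 128))
```

Whatever syntax you choose, the values should match what visitors actually see on the page, and the output should still be validated in your webmaster tool.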

In the screenshot below, the review schema is highlighted; it shows stars and a rating scale along with the total number of reviews. Adding schema creatively helps improve click-through rate. You can see the difference in the screenshots:

10) Navigation and User Friendliness

Having clear, clutter-free navigation is the best thing you can do to make your website friendly for your visitors. Keeping things simple and configuring navigation consistently on every page makes the site much easier to browse.

Clear calls to action combined with user-friendly navigation make it easier to drive your visitors to goals, and prevent frustration for visitors who would otherwise have to take unwanted steps to complete a purchase or reach a destination.

Remember the following things while dealing with the navigation and user-friendliness of your site:

- Site navigation should make it easy for users to reach all content on the website

- It should help search engines find all important content via crawling

- Important content should not depend on technologies like Ajax or be loaded only dynamically

- All navigational links should be visible at least once when the page is checked with JavaScript turned off

- The URL structure should be in an SEO-friendly format

- Pages should have a clear call to action

- A mobile-responsive version is recommended

11) Google Page Speed Insights Tool

Page speed indicates how fast your webpage loads. Google has indicated that site speed is one of the signals its algorithm uses to rank pages.

Because loading time is an important part of SEO ranking, it is recommended to optimize page speed using the Google PageSpeed Insights tool.

Pages with a longer load time tend to have higher bounce rates and lower average time on-page. Longer load times have also been shown to negatively affect conversions.

Consider the following factors while optimizing your page speed:

- All reported errors should be fixed by the design team

- After fixing all of Google's recommendations, check that the page has a good score in the PageSpeed Insights tool. The score should be above 85 for both the mobile and desktop versions.

12) No Index Tag

The noindex tag prevents search engines from indexing a web page. It controls indexing of the site on a page-by-page basis.

This tag should be used on e-commerce sites with duplicate content issues, as it keeps the tagged page out of the search index. To prevent search engine crawlers from indexing a page on your site, place the following meta tag into the <head> section of your page:

<meta name="robots" content="noindex">

To prevent only Google's crawlers from indexing a page, use:

<meta name="googlebot" content="noindex">

Keep these points in mind while adding the noindex tag:

- Check that the noindex tag is added on the correct pages

- Check that all test pages are blocked by noindex tags
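Both checks above come down to asking, for a given page, whether a noindex directive is present. A minimal sketch of that check follows; `RobotsMetaParser` and `is_noindexed` are hypothetical names, and the code only looks at meta tags in the HTML, not at HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = (attributes.get("content") or "").lower()
            # Directives are comma-separated, e.g. "noindex, nofollow".
            self.directives[name] = {part.strip() for part in content.split(",")}


def is_noindexed(html: str) -> bool:
    """Return True if any robots or googlebot meta tag carries noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return any("noindex" in values for values in parser.directives.values())


# Hypothetical test page that should stay out of the index.
print(is_noindexed('<head><meta name="robots" content="noindex, nofollow"></head>'))
```

Running a check like this over your list of test pages and your list of money pages makes it harder to noindex the wrong one by accident.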

13) Canonical Tag & 301 Redirections

A canonical tag is used when a duplicate content problem occurs on a website. On e-commerce sites, it is common for more than one URL to represent a single page, which causes a duplicate content issue. You can solve this by using a canonical tag to tell the search engine which page is the preferred or original one.

The syntax of the canonical tag is:

<link rel="canonical" href="url of original page" />

Redirection is the process of forwarding one URL to another. You can either permanently redirect old URLs to new ones using 301 redirects or temporarily redirect pages using 302 redirects. If a single page can be accessed through more than one URL, you can redirect the unwanted URLs to the original one using a 301.
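To see how several URL variants collapse to one canonical tag, consider the sketch below. It rests on a loud assumption: that the query string and fragment never change the page content, which is true for things like sort order or tracking parameters but not for every site. The function name and example URLs are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def canonical_link_tag(url: str) -> str:
    """Build a canonical <link> tag that drops the query string and fragment.

    Assumption: query parameters and fragments do not select different content.
    """
    parts = urlsplit(url)
    canonical = urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))
    return f'<link rel="canonical" href="{canonical}" />'


# Hypothetical variants of the same product page.
variants = [
    "https://www.example.com/shoes/red-sneaker?sort=price",
    "https://www.example.com/shoes/red-sneaker?utm_source=newsletter",
    "https://www.example.com/shoes/red-sneaker#reviews",
]
for url in variants:
    print(canonical_link_tag(url))
```

All three variants end up pointing at the same preferred URL, which is the effect the canonical tag is meant to achieve.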

14) Sitemap.xml

An XML sitemap is a file in which you list the webpages of a website to tell Google how your pages are organized. It also lets you include additional information about each URL, such as when the page was last updated, how often the page changes, and how important the page is relative to other pages on your website.

XML sitemaps help improve spiderability and ensure that all the important pages on your site are crawled and indexed. After creating the XML sitemap, you can submit it to search engines via their webmaster tools so that it gets indexed quickly.

Here are the points to remember while creating a sitemap:

- Check that the XML sitemap is created successfully

- Check that all pages are included

- Check that the last-updated date, priority, and change frequency are set properly

- Check that a sitemap is also provided for website visitors

- Check that the XML sitemap is submitted in your webmaster accounts
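For small sites, the sitemap file can be generated with a few lines of code. This is a minimal sketch using Python's standard `xml.etree.ElementTree`; the element names (`urlset`, `url`, `loc`, `lastmod`, `changefreq`, `priority`) and the namespace come from the sitemaps.org protocol, while `build_sitemap` and the page data are made up for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[dict]) -> str:
    """Return sitemap XML for pages given as dicts with loc, lastmod, changefreq, priority."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        # Only loc is required; the other fields are written when provided.
        for field in ("loc", "lastmod", "changefreq", "priority"):
            if field in page:
                ET.SubElement(url, field).text = str(page[field])
    declaration = '<?xml version="1.0" encoding="UTF-8"?>\n'
    return declaration + ET.tostring(urlset, encoding="unicode")


# Hypothetical pages, for illustration only.
print(build_sitemap([
    {"loc": "https://www.example.com/", "lastmod": "2024-01-15", "changefreq": "weekly", "priority": 1.0},
    {"loc": "https://www.example.com/contact", "priority": 0.5},
]))
```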

15) Robots.txt

The robots.txt file gives search engines directions about what content should be crawled on a website. When a search engine bot visits a website, it first checks for the robots.txt file.

You can either disallow or allow particular directories with the help of robots.txt. Make sure you have verified the following points while working with the robots.txt file:

- Check whether robots.txt file is updated correctly

- Check whether any required pages/directories are blocked

- Check whether any content that needs to be blocked has been allowed
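The points above can be verified without waiting for a crawler, because Python's standard library ships a robots.txt parser. The sketch below is one way to use it; the rules in `ROBOTS_TXT`, the example URLs, and the helper name `is_crawlable` are hypothetical, and the standard parser may not match every search engine's interpretation of more complex rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for an example site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /test/
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the rules in robots_txt let user_agent crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for url in ("https://www.example.com/products/", "https://www.example.com/admin/login"):
    print(url, "->", "allowed" if is_crawlable(ROBOTS_TXT, url) else "blocked")
```

Keeping a short list of URLs that must be allowed and URLs that must be blocked, and running them through a check like this after every robots.txt change, covers both of the last two points.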

16) .htaccess

A .htaccess file is used to configure details of your website without altering the server configuration files. It is commonly used for creating custom error pages and redirects.

When a .htaccess file is placed in a directory served by the Apache web server, Apache detects the file and applies its directives.

- Check that a proper rule is present in the .htaccess file to serve only one version of the URL (with or without www)

- Check that all redirects are relevant, and compare them with the previous ones

- Check whether a site revamp requires adding or changing any redirects
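The "one version of the URL" rule is easier to reason about when written out as plain logic. The sketch below is a Python model of what such a rule is expected to do, not Apache configuration: it assumes the www version is the preferred one, and `www_redirect` is a hypothetical helper you could use to list the redirects you expect before comparing them with what the server actually returns.

```python
from urllib.parse import urlsplit, urlunsplit


def www_redirect(url: str):
    """Return (301, target) if url lacks the www prefix, else None.

    Assumption: the www host is the single preferred version of the site.
    """
    parts = urlsplit(url)
    if parts.netloc.startswith("www."):
        return None
    target = urlunsplit(parts._replace(netloc="www." + parts.netloc))
    return 301, target


# Hypothetical URLs, for illustration only.
print(www_redirect("http://example.com/page?x=1"))
print(www_redirect("http://www.example.com/page?x=1"))
```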

17) Webmaster & Analytic Code

Adding webmaster and analytics code gives webmasters easy access to Google webmaster and analytics data that can be used to review and adjust SEO strategy.

A webmaster account gives:

- Data about top visited pages, top keywords

- Details about crawling and indexing activity of site

- Data regarding latest internal and incoming links

- Notifications about crawl errors etc

An analytics account is a free tool that you can use to track how visitors engage with your site. It gives the following data:

- Number of visits to pages, their sources, visitor behavior, time spent, etc.

- Comparison between different pages and their performance

- Visitor segmentation, which makes it easy to see how much traffic has increased after an SEO campaign

All these are good reasons to add webmaster and analytics accounts to your website. Here are a few things to consider while dealing with them:

- Make sure you have added the analytics and webmaster tracking code to all the pages of your website

- Monitor which keywords in your keyword list are generating traffic, and ensure you are optimizing the site for them

- Analyze site traffic against algorithm updates

- Find and fix crawl errors reported in the webmaster account

- Check whether the site has received a manual penalty from Google

Conclusion

On-page optimization is often called the core foundation for the success of any SEO campaign. Its results tend to last longer than those of off-page techniques, since all of the optimizations are in accordance with search engine rules.

So, give them a try. They are actually pretty easy to implement and well worth the results.

As always, feel free to reach out to us if you need any help with SEO. That’s all for now, cheers!